There are no firm guidelines for the responsible use of Artificial Intelligence (AI).

By Matt Lowe, Chief Product and Marketing Officer, MasterControl

Technology moves so quickly that sometimes we don’t consider the implications of something until well after it’s created. Artificial intelligence (AI) is one such example. Spending on AI is projected to hit $110 billion annually by 2024,1 but there’s still debate on how to use it. We already interact with AI daily, whether that’s Siri, a chatbot, or viewing recommendations on Netflix. Despite these common uses, there are no firm guidelines in place for the responsible use of AI.

Responsible AI won’t necessarily look the same across industries. The concerns raised by AI in clinical trials, for example, differ from those in manufacturing. However, since manufacturing is more suited to automation, the worry that AI will replace humans is justified. The unique ethics of AI in manufacturing, the risks it presents, and how to mitigate them are questions the industry should be addressing now.

Technology has a history of eliminating jobs, so it’s no wonder there’s concern about AI replacing humans. Where the technology currently exists to completely remove humans from the manufacturing floor, this has already happened. The ethics of this are questionable, because if you take humans out of the equation, you also remove human innovation and the ability to spot where AI may have gotten it terribly wrong, both of which are vital to manufacturing.

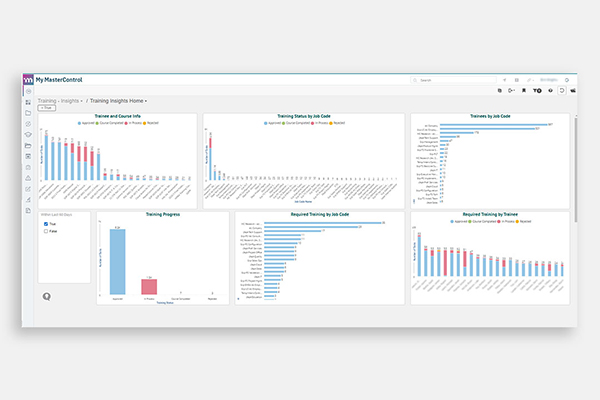

Instead of removing humans, we should look to augment them with appropriate uses of AI. An appropriate use automates a tedious task or drives data consistency to improve efficiency. AI takes on the parts of work that are non-value-added or that contribute to data inconsistency. Those tasks include much of the data tracking and analysis that is currently done by hand. This frees up workers to focus on other responsibilities.2

An example of this is training. In manufacturing, it isn’t enough to be trained — you have to be trained effectively. An unclear work instruction (WI) or standard operating procedure (SOP) can cause multiple line operators to struggle. Knowing about and fixing that WI or SOP would save time and money, but it is difficult and slow to identify these issues without the use of AI or advanced analytics.
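As a rough illustration of the kind of analysis described above, the sketch below flags instructions that several different operators fail on the first attempt. The record layout, the `flag_unclear_instructions` name, and both thresholds are assumptions made for this example, not features of any particular product.

```python
from collections import defaultdict


def flag_unclear_instructions(records, min_operators=3, max_failure_rate=0.25):
    """Return (instruction_id, failure_rate, failing_operator_count) tuples.

    records: iterable of (operator_id, instruction_id, passed) tuples.
    Both thresholds are illustrative assumptions, not industry standards.
    """
    attempts = defaultdict(int)
    failures = defaultdict(int)
    failing_operators = defaultdict(set)

    for operator_id, instruction_id, passed in records:
        attempts[instruction_id] += 1
        if not passed:
            failures[instruction_id] += 1
            failing_operators[instruction_id].add(operator_id)

    flagged = []
    for instruction_id, total in attempts.items():
        rate = failures[instruction_id] / total
        operators = len(failing_operators[instruction_id])
        # Many different operators failing suggests the document is the
        # problem, rather than any one person.
        if rate > max_failure_rate and operators >= min_operators:
            flagged.append((instruction_id, round(rate, 2), operators))

    # Worst failure rate first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical first-attempt results for two work instructions.
records = [
    ("op1", "WI-101", False), ("op2", "WI-101", False),
    ("op3", "WI-101", False), ("op4", "WI-101", True),
    ("op1", "WI-202", True), ("op2", "WI-202", True),
    ("op3", "WI-202", False), ("op4", "WI-202", True),
]
flagged = flag_unclear_instructions(records)
print(flagged)
```

Here only the hypothetical WI-101 is flagged, because three of its four operators failed. A real system would also need to account for operator experience and task difficulty before blaming the instruction.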

Identifying and properly using your best employees is also a job for AI. There’s obvious ROI in being able to do that, but manufacturers have to be sure their AI is performing fairly and without bias when it comes to employees. This brings up the point of explainability in AI. It isn’t enough to tell an employee that they need to improve because the AI told you so. A high level of understanding of the data behind the AI is necessary.

Explainable AI is also important because it presents less risk. Understanding how the algorithm works and the data it uses lets you identify high-risk areas and take steps to control that risk. Not understanding the algorithm is a risk in itself. That’s why a robust AI training program based on accurate data is essential. Inaccurate data will lead to inaccurate conclusions. Good data handling practices can ensure data integrity is preserved.

The more automated data collection is, the more accurate the data. It isn’t enough to just have data. As Dimitri Laurent of 3B-Fibreglass put it, “AI isn’t a kind of magic that works by throwing data into algorithms.”3 The proper framework for AI requires digitization. This includes the production record on the manufacturing line. In organizations that record these on paper, a person writes down the information. If that data is needed later in electronic format, someone else enters it. Considering how many data points there are in a production record, that presents thousands of opportunities for error. That’s why interconnected systems that make everything digital are so important.
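To make the "thousands of opportunities for error" point concrete, here is a back-of-the-envelope sketch. It assumes a hypothetical record of 1,000 data points, each written once by hand and keyed in once later, with an independent 0.1% error rate per manual step. Both figures are illustrative assumptions, not measured values.

```python
def manual_entry_risk(data_points, manual_steps=2, error_rate=0.001):
    """Estimate error exposure for a manually handled production record.

    data_points:  number of fields in the record (assumed).
    manual_steps: times each field is handled by a person, for example
                  handwritten once and re-keyed once.
    error_rate:   assumed independent chance of an error per manual step.
    """
    opportunities = data_points * manual_steps
    expected_errors = opportunities * error_rate
    # Probability that at least one of the opportunities goes wrong.
    p_any_error = 1 - (1 - error_rate) ** opportunities
    return opportunities, expected_errors, p_any_error


opportunities, expected_errors, p_any_error = manual_entry_risk(1000)
print(f"{opportunities} opportunities, "
      f"about {expected_errors:.1f} expected errors, "
      f"{p_any_error:.0%} chance of at least one error")
```

Under these assumptions, even a very low per-entry error rate makes an error-free record unlikely. That is the case for capturing the data digitally at the source.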

Unfortunately, even interconnected systems won’t protect you from biased data. Generally, this isn’t as big an issue in manufacturing, but it can still be a problem. In response to a Pew Research Center survey, Brad Templeton wrote, “Yes, we will encode our biases into AI. At the same time, the great thing about computers is once you see a problem you can usually fix it.”4 It is possible to scrutinize data and algorithms to identify and eliminate bias, which is the only way to have truly accurate AI.

It’s in the industry’s best interest to think about responsible AI now, before its use becomes even more prevalent. A consensus on what constitutes ethical AI will help ensure accuracy and fairness in its use. Manufacturers that embrace these practices will have the best of both worlds: the ingenuity that comes with human employees and the accuracy and computing power of AI.

Sources

Matt Lowe, chief product and marketing officer at MasterControl, provides a unified vision for the marketing and product teams. He brings a unique understanding of both product and customer knowledge to develop MasterControl’s go-to-market strategies and messaging. Lowe is a medical device expert with experience in product development and product management at Ortho Development Corp. and Bard Access Systems, a subsidiary of BD. Lowe has successfully launched more than a dozen medical devices. He has five patents issued and one pending. His regulatory experience includes writing a 510(k) that was cleared by the FDA and managing a multi-site, multi-year post-market clinical study for orthopedic devices.

Since joining MasterControl in 2006, he has held several executive roles in product, engineering, strategy, sales, and marketing. Lowe has a bachelor’s degree in mechanical engineering from the University of Utah and an MBA from Indiana University.
