Problems, challenges and solutions encountered by manufacturing companies when it comes to handling data.

By Tim Hall, VP of Products at InfluxData

Data has long been treated in the manufacturing industry as the orphan nephew living in the cupboard under the stairs. While operational and service industries have leapt on the benefits of data as the catalyst of business growth and efficiency gains, the manufacturing sector has been slow to adopt the culture of becoming a data-driven business. According to Accenture, only 13% of manufacturing companies have seen through a digital transformation of their processes.

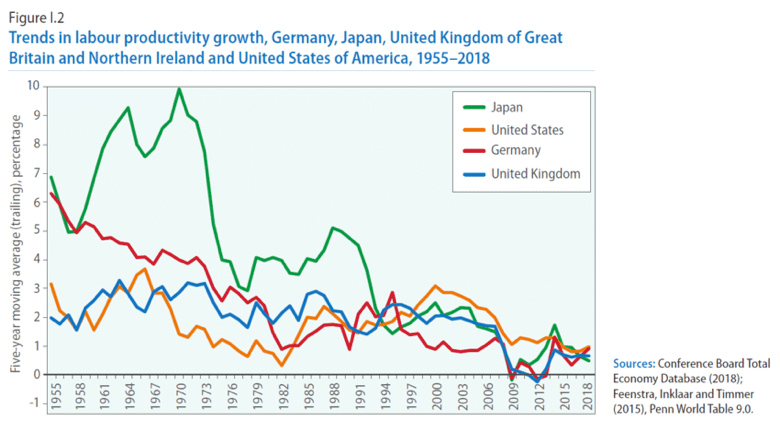

In many ways, the core approach to manufacturing has remained unchanged for the past 50 years, despite the industry experimenting with off-shoring and integrated manufacturing in megafactories. While these have resulted in short-term cost savings (the real cost of labor having since caught up in the off-shore locations), they have not fueled growth in labor productivity. The long-term trend has been a decline in productivity growth that does not bode well for future generations.

Although coined as a phrase back in 2012, the buzzword of Industry 4.0 has gained increased currency in recent years with its promise to use the power of data to revolutionize manufacturing. Industry 4.0 represents the fourth revolution that has occurred in manufacturing. From the first industrial revolution (mechanization through water and steam power) to the mass production and assembly lines using electricity in the second, the fourth industrial revolution will take what was started in the third, with the adoption of computers and automation, and enhance it with smart and autonomous systems fueled by data and machine learning.

The big difference between Industry 4.0 and Industry 3.0 is that while in Industry 3.0 computers were introduced to enhance existing processes, Industry 4.0 seeks to reinvent the entire process around the power of data. But outside of the buzzwords, what does this mean in practice?

The road to establishing a data-driven manufacturing business encompasses three key steps:

In practice, this means a soup-to-nuts reassessment of the entire manufacturing process, so that automation in the form of cyber-physical systems and data exchange through IoT sits at the core of the manufacturing plant's architecture rather than being an afterthought or bolt-on.

Traditionally, manufacturing technology has been split into operational and information technology. Where operational technology (OT) has focused on the sensors and software that monitor and control the manufacturing process, information technology (IT) has provided the separate function of data processing and analytics. One way of looking at Industry 4.0 is that it marks the convergence of OT and IT, and a real-time interdependence between the process and the analytics.

But achieving this convergence means reinventing the manufacturing process around a data architecture that can ingest the huge volumes of real-time data generated by IoT sensors and other devices, and enable nanosecond-level control of the entire environment. The critical issue here is time, and how all elements in the production process must adhere to and be controlled by the central management system.

The cornerstone of an advanced manufacturing plant is the central control system. Since time is the critical element in its operation, a time-series database offers by far the best route to providing this required precision.

The implementation of an Industry 4.0 manufacturing plant requires an adherence to data standards that can ensure the seamless flow of information.

In operation, the process applications backed by a time-series database deliver two critical services: keeping the production line running efficiently and minimizing downtime. Although these may sound like one and the same thing, they are very different in practice.

The efficiency of the production line comes down to the control and sequencing of events in the manufacturing process. This control requires the ingestion of huge amounts of data from a massively expanded array of sensors, so that real-time instructions can be delivered to the cyber-physical systems and other parts of the line. This necessitates a shift away from legacy backend systems that derive from the era of independent OT and IT systems, toward a time-series database architecture that can accommodate the scale and precision required.
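As a rough illustration of what sequencing events from many sensors involves, the sketch below merges two per-sensor streams into a single time-ordered sequence. The `Reading` type, the sensor names and the sample values are hypothetical, and the sketch assumes each stream already arrives sorted by timestamp; a production system would also have to cope with late and out-of-order data.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Reading(NamedTuple):
    timestamp_ns: int   # nanoseconds since an arbitrary epoch (assumed unit)
    sensor_id: str      # hypothetical sensor name
    value: float


def merge_streams(*streams: Iterable[Reading]) -> Iterator[Reading]:
    """Lazily merge per-sensor streams, each already sorted by time."""
    return heapq.merge(*streams, key=lambda r: r.timestamp_ns)


# Made-up sample data for two sensors on the same line.
conveyor = [Reading(100, "conveyor_speed", 1.2), Reading(300, "conveyor_speed", 1.3)]
oven = [Reading(200, "oven_temp", 180.0), Reading(400, "oven_temp", 181.5)]

ordered = list(merge_streams(conveyor, oven))
```

Because `heapq.merge` is lazy, the same function works on generators that yield readings as they arrive, which is closer to how a live line would feed a control system.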

Production line downtime is minimized by analyzing the data to predict problems and equipment failures before they actually occur. Through this predictive failure analysis, problems can be forestalled and action taken to eliminate the risk of an unscheduled stoppage.
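One simple way to picture predictive failure analysis is a rolling check that flags a reading when it departs sharply from recent behavior. The sketch below uses a rolling z-score; this is an illustrative technique chosen here, not one the article prescribes, and the window size, threshold and vibration values are all assumptions.

```python
from collections import deque
from statistics import mean, stdev


def drift_alerts(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate sharply from the recent window."""
    recent = deque(maxlen=window)
    alerts = []
    for i, value in enumerate(readings):
        # Only score a reading once a full window of history is available.
        if len(recent) == window:
            mu = mean(recent)
            sigma = stdev(recent)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                alerts.append(i)
        recent.append(value)
    return alerts


# Made-up vibration amplitudes; the seventh reading is a deliberate spike.
vibration = [0.50, 0.52, 0.49, 0.51, 0.50, 0.51, 0.90, 0.52]
alerts = drift_alerts(vibration)
```

In this toy version the spike itself enters the window after being flagged, which inflates the next few standard deviations. Real deployments typically use more robust statistics or trained models.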

The role of a time-series database is to deliver three aspects:

Another key aspect is the handling of the manufacturing data and the need for scalability and open exchange.

The data generated by a manufacturing environment can be highly variable and unpredictable in its volume. The core time-series database needs to be able to both ingest a high throughput of data and sustain real-time querying. If either of these fails, the operational integrity of the production line could be compromised.
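To make the two requirements concrete, here is a toy in-memory sketch of the ingest-and-query pattern: points are kept sorted by timestamp so that a time-range query is a pair of binary searches. The class name, method names and sample values are hypothetical, and this is not how any particular time-series database is implemented; it only shows why ordering by time makes range queries cheap.

```python
import bisect


class TimeSeriesBuffer:
    """Toy in-memory store: points kept sorted by timestamp."""

    def __init__(self):
        self._timestamps = []
        self._values = []

    def write_batch(self, points):
        """Ingest (timestamp_ns, value) pairs; they may arrive out of order."""
        for ts, value in sorted(points):
            idx = bisect.bisect_right(self._timestamps, ts)
            self._timestamps.insert(idx, ts)
            self._values.insert(idx, value)

    def query_range(self, start_ns, end_ns):
        """Return points with start_ns <= timestamp < end_ns."""
        lo = bisect.bisect_left(self._timestamps, start_ns)
        hi = bisect.bisect_left(self._timestamps, end_ns)
        return list(zip(self._timestamps[lo:hi], self._values[lo:hi]))


store = TimeSeriesBuffer()
store.write_batch([(300, 72.1), (100, 71.8), (200, 71.9)])  # out-of-order batch
store.write_batch([(250, 72.0)])                            # late arrival
recent = store.query_range(200, 300)
```

List insertion is linear in the worst case, so this sketch would not sustain real production write rates. Purpose-built databases use append-optimized, time-partitioned storage for that reason.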

The open exchange of data is important to the smooth operation of any advanced manufacturing process, but it is critical in an Industry 4.0 environment. Here the entire process relies on the wide use of sensors to provide data that leads to real-time process adjustment. If an inappropriate data architecture is implemented, it can create data silos in which critical data required for real-time process optimization is unavailable.

If we trust the research from Accenture, 87% of manufacturers have not yet implemented an Industry 4.0 approach. This means they are relying on post-event analytics to understand the efficiency of their operations, with no ability to optimize in real time or to generate predictive failure intelligence.

For these manufacturers to make the transition to Industry 4.0 the key steps are:

Companies that take these steps will reap the benefit of predictive analytics where the real-time data can forecast when a line or component problem will arise and allow preemptive maintenance to solve the problem before it becomes a crisis. In industries such as pharmaceuticals, this capability is highly important because in the event of a production failure the entire production line has to be cleared with all products disposed of.
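Forecasting when a problem will arise can be pictured, in its simplest form, as extrapolating a trend to a known limit. The sketch below fits a least-squares line to recent readings and estimates when it would cross an upper limit. The function name, the bearing-temperature data and the 80 °C limit are invented for illustration, and the linear-trend assumption is a deliberate simplification.

```python
def estimate_crossing_time(times, values, limit):
    """Fit a least-squares line and return the time at which it reaches limit.

    Assumes limit is an upper bound. Returns None when the trend is flat
    or falling, since the line would then never rise to the limit.
    """
    n = len(times)
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    if sxx == 0:
        return None  # all samples share one timestamp; no trend to fit
    slope = sxy / sxx
    intercept = v_mean - slope * t_mean
    if slope <= 0:
        return None
    return (limit - intercept) / slope


# Made-up hourly bearing temperatures rising 2 degrees C per hour.
hours = [0, 1, 2, 3, 4]
bearing_temp_c = [60.0, 62.0, 64.0, 66.0, 68.0]
eta = estimate_crossing_time(hours, bearing_temp_c, limit=80.0)
```

With this sample data the fitted line reaches the limit at hour 10, which would give a maintenance team six hours of notice after the last reading. Real equipment rarely degrades linearly, so an estimate like this is a starting point rather than a schedule.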

Through these steps companies can build a manufacturing process that is truly prescriptive. This may mean that a manufacturing process will adjust and optimize based on internal or external factors.

Inevitably, data will now be the cornerstone of strategy for any manufacturer considering a new plant or redeveloping an existing one. Before location, supply chain and floor plans are considered, the data architecture will be the central consideration in how the plant will operate to maximize throughput and maintain optimum efficiency. Through this approach, manufacturers will reap the rewards of more flexible manufacturing, process optimization, rapid scalability and predictive failure analysis to maintain near 100% uptime.

Data in manufacturing has traveled far from its days under the stairs and is set to be the wizard that shakes up industry and ushers in a new era of productivity growth.

Tim Hall is VP of Products at InfluxData.